Inside NetFoundry docs: Streamline doc freshness with AI

A content orchestrator series for the modern technical writer

At NetFoundry, we're building the foundational architecture for zero-trust networking with OpenZiti (our programmable zero-trust overlay). My job is to keep the docs fresh, easy to consume, and technically accurate. When dealing with cybersecurity, a single stale CLI flag or a misdescribed API endpoint can be the difference between a secure connection and a vulnerability. And in the brave new world of AI, your docs have a new audience: LLMs and agents. If the docs are wrong, the AI will be wrong, which turns documentation debt into systemic risk for users.

With 6+ products spanning dozens of repositories, a 20-to-1 engineer-to-writer ratio, and a startup environment moving at breakneck speed, adaptation is mandatory. Modern developers have already 10x'ed themselves, orchestrating multiple agents to churn out code, features, and new products like never before.

The content orchestrator series

I’m starting this series to document how I’m using every AI tool at my disposal to amplify my output. This isn't about replacing the human; it's about becoming a cyborg writer where the AI handles the manual labor and I supervise, creating guardrails and meaningful direction. The story of the technical writer has gone from writing, to editing, to orchestrating (and still some editing). While we have agents, agency in ourselves has never been more important. Agency in docs (or any product) means owning the lifecycle from blank slate to finished product, from planning to execution, making crucial judgments and fixes along the way. Without AI, you'll be the slowest boat on the water. But without an experienced human at the helm to guide the ship, you'll end up on the rocks.

The doc-check skill

By now you probably know what LLMs are and what coding agents like Claude Code do. A SKILL.md file is a Markdown file that defines a specific, multi-step workflow (along with any context it needs), turning a general-purpose AI into a specialized agent capable of executing complex instructions. You program a complex workflow once, and Claude can then execute it repeatedly and consistently, on command.

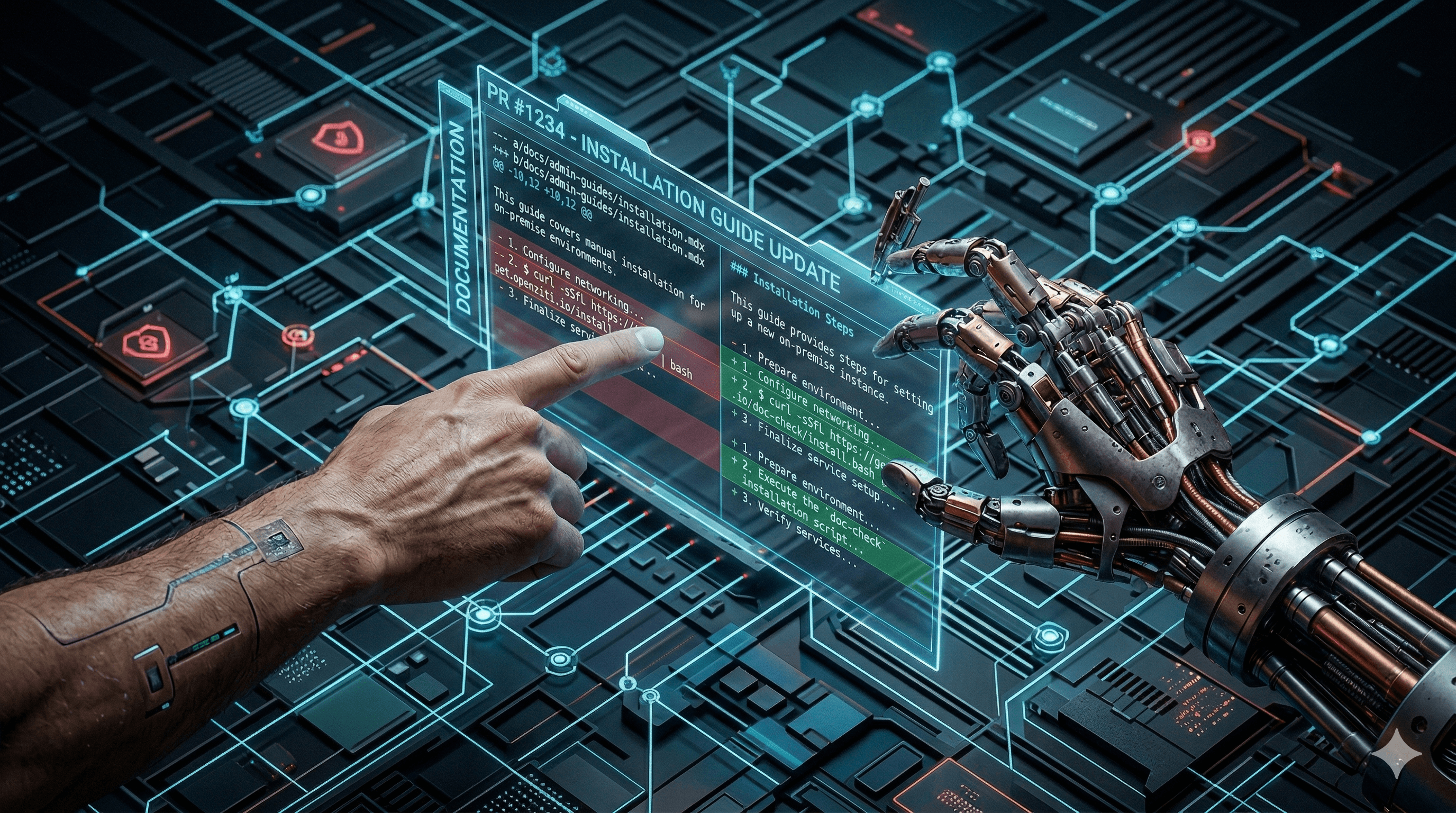

The first major tool I’ve built for my workflow is a Claude Code skill I call doc-check. Its purpose is simple: bridge the visibility gap between what’s happening in our git repositories (we use GitHub/Bitbucket) and what actually exists in our Markdown doc files.

Instead of manually opening every PR and reading every diff, I run a command. It runs across multiple products, some containing multiple repos, then pulls the recent PRs and analyzes their diffs. The skill works in three steps:

The differential audit: It fetches merged PRs and filters out the 'noise' (like test updates, dependency bumps, or my own doc updates) to find 'signal' changes like new CLI flags, API endpoints, or config variables.

The grep-powered search: It doesn't guess. It uses grep to search our actual local documentation directories to see if these new items are already covered, missing, or stale.

The report: It gives me an actionable dashboard of exactly where I need to spend my energy, and lets me quickly generate a first draft of the necessary doc updates with a single command.
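To make the three steps above concrete, here is a minimal Python sketch. It is not the skill's actual implementation (the skill is a set of instructions in SKILL.md, and it calls grep itself); the `PullRequest` shape, the `NOISE_PATTERNS` list, and the helper names are all assumptions made for illustration, and the search is a plain-Python stand-in for grep. Judging whether a doc is STALE takes a closer read than a string match, so the sketch only separates SKIPPED, COVERED, and MISSING.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase
from pathlib import Path

# Hypothetical "noise" path patterns; a real setup would tune these per repo.
NOISE_PATTERNS = ["docs/*", "*.md", "*.mdx", "*_test.go", "go.mod", "go.sum", ".github/*"]


@dataclass
class PullRequest:
    """Assumed minimal shape of a merged PR (not a real API type)."""
    number: int
    title: str
    files: list[str]
    # User-facing items pulled from the diff: CLI flags, endpoints, config keys.
    terms: list[str] = field(default_factory=list)


def is_noise(pr: PullRequest) -> bool:
    """Step 1: a PR is noise when every changed file matches a noise pattern."""
    return all(any(fnmatchcase(f, p) for p in NOISE_PATTERNS) for f in pr.files)


def find_mentions(term: str, docs_dir: Path) -> list[Path]:
    """Step 2: grep-like literal search for a term across .md/.mdx files."""
    hits = []
    for path in sorted(docs_dir.rglob("*.md*")):
        if path.is_file() and term in path.read_text(encoding="utf-8", errors="ignore"):
            hits.append(path)
    return hits


def check(prs: list[PullRequest], docs_dir: Path) -> dict[int, str]:
    """Step 3: label each PR for the report."""
    report = {}
    for pr in prs:
        if is_noise(pr):
            report[pr.number] = "SKIPPED"
        elif pr.terms and all(find_mentions(t, docs_dir) for t in pr.terms):
            report[pr.number] = "COVERED"
        else:
            report[pr.number] = "MISSING"
    return report
```

Calling `check(prs, Path("docs"))` returns a mapping from PR number to label. In practice the interesting work happens in extracting `terms` from each diff, which is where the agent earns its keep.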

What the output looks like

Here’s a look at a recent run on our zrok repository. It’s a transparent view of what’s changed and where our documentation lacks freshness.

# doc-check: zrok (since 2026-03-18T17:42:15Z)

5 PRs analyzed · 2 need doc work · 1 already covered · 2 skipped (internal)

## Needs doc work

### #1197 — add docker instance fetcher (merged 2026-03-20) · STALE

- Adds fetch.bash: a new one-liner that downloads only the essential Compose files into a zrok2-instance/ directory without cloning the full repo

curl -sSfL https://get.openziti.io/zrok2-instance/fetch.bash | bash

- The repo's README.md now uses this as the primary install method

- Existing doc: self-hosting/deployment/docker.mdx — Step 1 uses a different curl URL (https://get.zrok.io/docker | tar -xz -C zrok2-instance) that no longer matches the upstream README; the fetch.bash method is not mentioned

- Run /doc-check zrok --draft 1197 to generate a draft

### #1190 — drop public token template; test metrics and dynamic name selection (merged 2026-03-18) · MISSING

- zrok2 admin create frontend command: the <urlTemplate> argument is now optional (was required).

Updated usage: frontend <zitiId> <publicName> [urlTemplate]

- This affects self-hosting operators who configure public frontends

- No existing doc found covering zrok2 admin create frontend or the frontend creation workflow

- Suggested location: self-hosting/ — either a new admin reference page or a step in the deployment guides

- Run /doc-check zrok --draft 1190 to generate a draft

### Already covered

- 1192: start uploading self-hosting linux packages: adds zrok2-controller, zrok2-frontend, zrok2-metrics-bridge to the publish pipeline. self-hosting/deployment/linux.mdx already documents installing all three packages. No doc work needed.

### Skipped (internal)

- 1196: stop publishing container images for PR heads (CI/build change only)

- 1183: concepts round 2 (doc restructuring work — the PR itself is documentation changes)

The reports are also saved to an /output/doc-check folder in case I can't immediately start addressing the doc debt. The skill also saves the last run date, so next time I run a doc-check, it will only check since the last run. If I want to see a status overview of all my products, I run /doc-check status.
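The "only check since the last run" behavior comes down to a small piece of persisted state. Here is a minimal Python sketch of one way to keep it, assuming a JSON file that maps each product to its last-run timestamp; the file name `last-run.json` and both function names are hypothetical, not taken from the skill.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

# Hypothetical location; the actual skill may store this differently.
STATE_FILE = Path("output/doc-check/last-run.json")


def load_last_run(product: str, state_file: Path = STATE_FILE) -> Optional[str]:
    """Return the saved UTC timestamp for a product, or None on a first run."""
    if not state_file.exists():
        return None
    return json.loads(state_file.read_text(encoding="utf-8")).get(product)


def save_last_run(product: str, state_file: Path = STATE_FILE,
                  now: Optional[datetime] = None) -> str:
    """Record the run time for a product and return the stored timestamp."""
    now = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    stamp = now.strftime("%Y-%m-%dT%H:%M:%SZ")
    state = {}
    if state_file.exists():
        state = json.loads(state_file.read_text(encoding="utf-8"))
    state[product] = stamp
    state_file.parent.mkdir(parents=True, exist_ok=True)
    state_file.write_text(json.dumps(state, indent=2), encoding="utf-8")
    return stamp
```

The timestamp uses the same format as the `since` value in the report header, so the next run can pass it straight into its PR query.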

More time for manual testing

The best part of this isn't the automated drafting—though the --draft flag does save me a massive amount of time on the first iteration. The real win is that by automating the detective work, I have more time for manual testing.

The best way to understand is to actually do. And because I'm not wasting multiple hours a day auditing PRs or trying to chase down developers to explain changes to me, I can actually run that fetch.bash one-liner from the example above. I can verify the directory structure, hit the API endpoints, and ensure the instructions are bulletproof before they ever hit the site.

The AI gives me the map and the first draft, and then I orchestrate. I edit, format, trim, expand, test, and clarify. I make sure the logic of the doc matches the logic of the software, so new users won't hit a roadblock. I make sure the steps I've written match the UI. SMEs are still there when I need help, but now my questions are far more surgical and precise, and the developers thank me for it.

You can check out the skill here: https://github.com/netfoundry/docusaurus-shared/blob/main/skills/doc-check/SKILL.md

If you found this interesting, consider starring us on GitHub. If you haven't seen it yet, check out zrok.io—a sharing solution built on OpenZiti that's completely open source. And if you’re as excited about the intersection of AI and security as we are, help us get the word out about our new MCP gateway (secure access to internal MCP servers without public endpoints) and LLM gateway (a zero-port, OpenAI-compatible proxy for secure model routing). Tell us how you're using OpenZiti on X, Reddit, or over at our Discourse or YouTube.